The Definitive Guide to Investing in Agentic AI

Building an Agentic Stack

The more I speak with investors, the clearer it becomes that there are only two schools of thought on where the current market rally is headed.

We are still early, and there is a long way to go.

We are near the top, on the cusp of a dot-com style collapse.

While no one can be certain, the truth is probably somewhere in the middle. But if you are in the “it’s a bubble” bucket, let me start by highlighting the mistake I made at the end of 2023 when I published “The Problem with the Magnificent 7”.

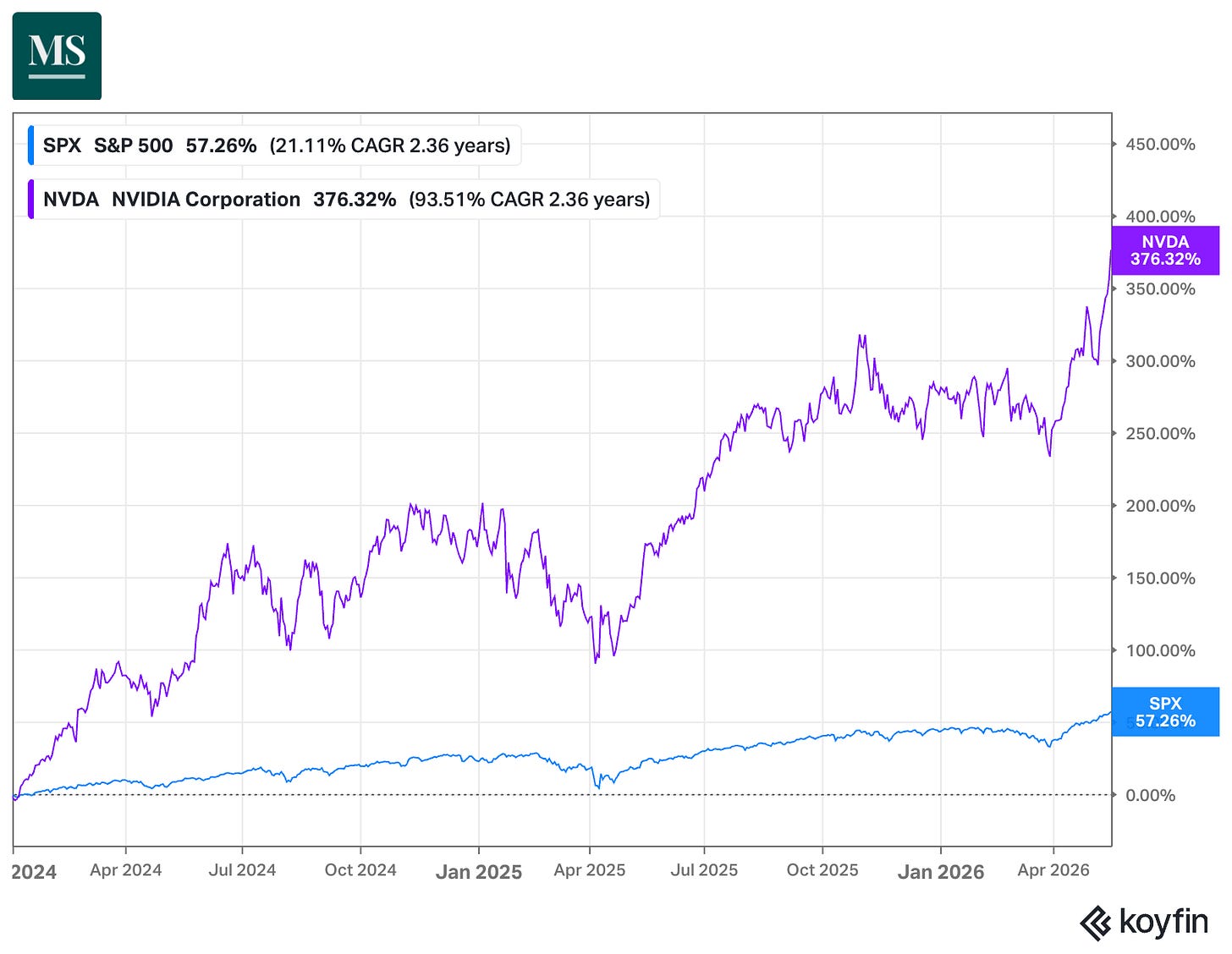

Nvidia had ended the year with a 245% return and had become one of the five most valuable companies in the world. Its Price/Sales had doubled from ~11x in 2022 to ~22x in 2023. We were naturally skeptical about how much further the company could grow from such a rich valuation.

Turns out, we underestimated just how massive the AI buildout would be. While we were busy looking at Nvidia's P/S and thinking it was expensive, the company's actual sales were about to explode. Revenue jumped from $61 billion in FY2024 to $130 billion in FY2025 and $216 billion in FY2026. Every big tech company was suddenly in an arms race to buy as many GPUs as they could get their hands on, and Nvidia was the only one selling. So even though the stock looked expensive at the end of 2023, earnings grew so fast that we missed the rally.
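To see why a static multiple misled us, here is a rough sketch using the revenue figures above. It assumes, purely for illustration, that the 22x Price/Sales was measured against the $61 billion figure and that the market cap stayed frozen at that level. Neither assumption comes from the data above, and the function names are ours.

```python
# Revenue figures quoted above, in billions of USD.
revenue = {"FY2024": 61, "FY2025": 130, "FY2026": 216}

# Assumption for illustration only: the 22x P/S is applied to FY2024 revenue,
# and the market cap is then held constant at that level.
START_PS = 22
frozen_market_cap = START_PS * revenue["FY2024"]


def yoy_growth(prev: float, curr: float) -> float:
    """Year-over-year growth as a percentage."""
    return (curr / prev - 1) * 100


def implied_ps(market_cap: float, sales: float) -> float:
    """Price/Sales multiple for a given market cap and sales figure."""
    return market_cap / sales


years = list(revenue)
for prev, curr in zip(years, years[1:]):
    growth = yoy_growth(revenue[prev], revenue[curr])
    ps = implied_ps(frozen_market_cap, revenue[curr])
    print(f"{curr}: revenue +{growth:.0f}% YoY, P/S at a frozen price = {ps:.1f}x")
```

Under those assumptions, the multiple compresses from 22x to roughly 10x and then 6x without the share price moving at all. A stock that looks expensive on trailing sales can look cheap in hindsight if sales grow fast enough.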

It took me quite a while to change my mind about AI and to understand that it’s a structural, not a cyclical, change. It’s often said that the mark of a good investor is the ability to change his mind when presented with new information.

So, before we get into the Agentic stack, here are 3 bits of information on how much AI is going to change the world in the next few years:


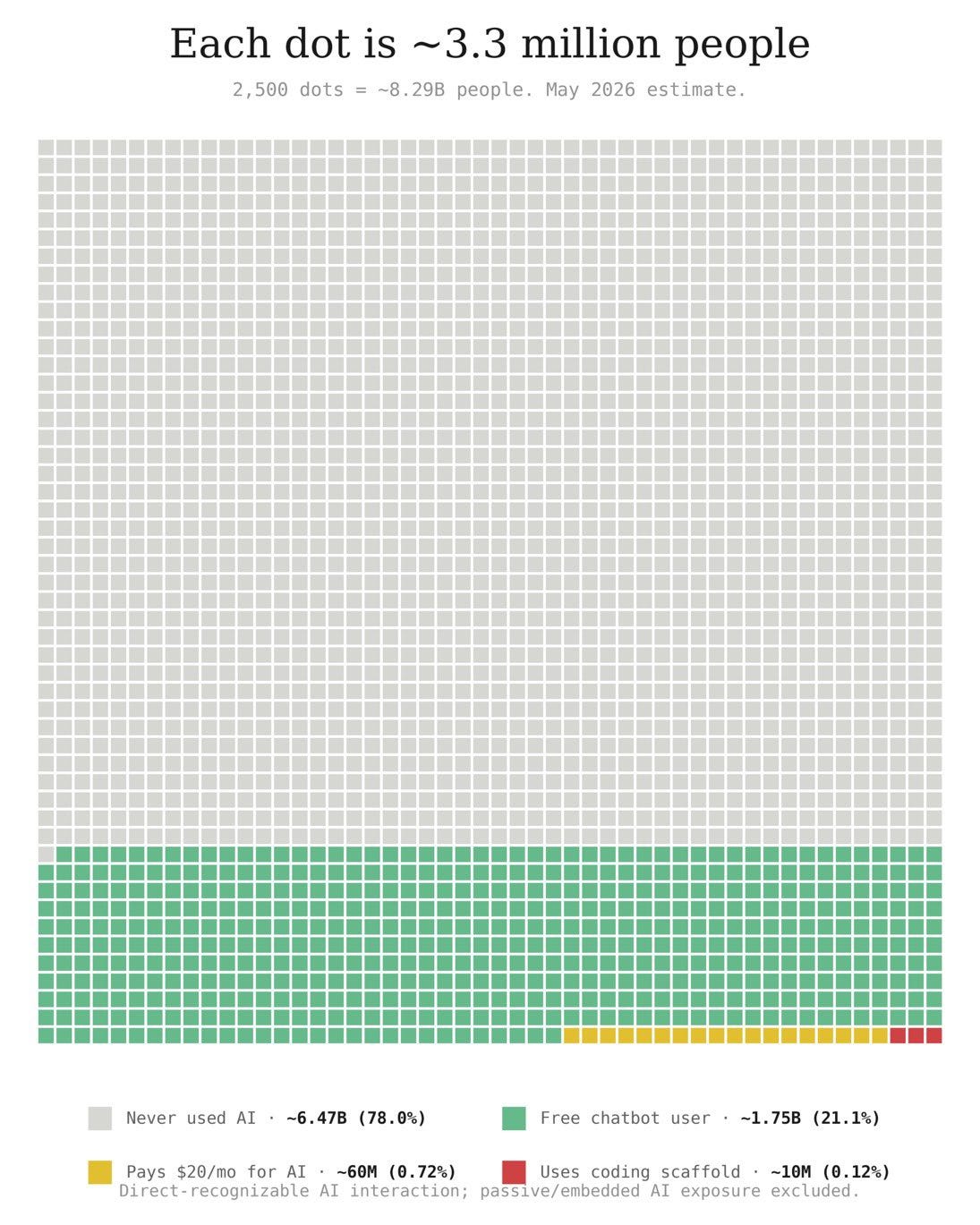

1. Outside of the AI hype, we are still quite early

As per the latest estimates, ~78% of humanity has never used any AI tool. So while it feels like everyone on tech Twitter is running an agent, the reality is far different.

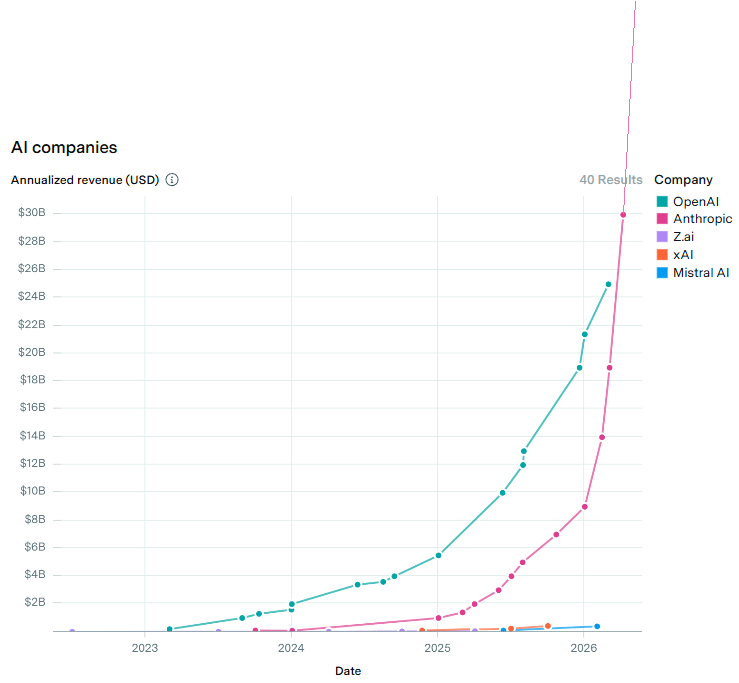

2. Even so, revenues at the foundation model companies are exploding

3. We haven’t even considered physical agents

Figure just had a 24-hour live stream featuring an autonomous humanoid robot picking and sorting packages.

Agents are going mainstream

Most AI conversations right now are about coding assistants (and that is what is driving the revenue surge for OpenAI and Anthropic), but that’s really only useful for the ~25 million developers out there.

Agents are going after something much bigger: the 1.4 billion knowledge workers who spend their days buried in email and spreadsheets, jumping between a dozen different tools. Once agents start handling even a slice of that work, AI usage goes through the roof, which means a lot more compute, a lot more GPUs, and a lot more demand flowing right back to the semiconductor stack.

On May 7, Anthropic announced that Claude is now generally available inside Excel, PowerPoint, and Word, with Outlook in public beta.

Up until a few months ago, using AI in your day job meant writing prompts, hitting an API, or running tools like Cursor. With this release, AI will now operate the 3 apps that 1.4 billion knowledge workers use the most. Microsoft has its own version of this, called Copilot. Copilot has ~20 million paying users, and Microsoft rolled out its latest Copilot and Office 365 E7 bundle on May 1st.

Watch the demo to appreciate what this looks like in practice. This is an inflection point for AI usage in everyday tasks.

Agentic AI: the inflection point

A developer running a focused 30-minute coding session on Cursor uses around 50K to 200K tokens (the units of text a model reads and writes, and a rough proxy for compute). Compare that to a financial analyst asking Claude to reconcile a 200-row file against a pivot table, draft the email summary, and prep three slides for Monday’s review.

That single workflow uses 800K to 2M tokens.

Now multiply that by 1.4 billion office workers. Microsoft has already said that more than 80% of Fortune 500 companies have rolled out Copilot to part of their workforce. The load that agentic AI puts on enterprise systems will make chatbots' token consumption seem trivial.
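To put a rough number on that multiplication, here is a back-of-the-envelope sketch. The token ranges and the worker count are the figures quoted above. The one-workflow-per-worker-per-day rate is a hypothetical assumption of ours, not a forecast, and full adoption by all 1.4 billion workers is an upper bound.

```python
# Token ranges quoted above (low, high).
CODING_SESSION = (50_000, 200_000)
AGENT_WORKFLOW = (800_000, 2_000_000)
KNOWLEDGE_WORKERS = 1_400_000_000

# Hypothetical assumption: each worker runs this many agentic workflows per day.
WORKFLOWS_PER_WORKER_PER_DAY = 1


def daily_tokens(per_workflow: int, workers: int, workflows_per_day: int) -> int:
    """Total tokens consumed per day across all workers."""
    return per_workflow * workers * workflows_per_day


low, high = (
    daily_tokens(tokens, KNOWLEDGE_WORKERS, WORKFLOWS_PER_WORKER_PER_DAY)
    for tokens in AGENT_WORKFLOW
)

# Like-for-like multiples: low end vs. low end, high end vs. high end.
low_multiple = AGENT_WORKFLOW[0] / CODING_SESSION[0]
high_multiple = AGENT_WORKFLOW[1] / CODING_SESSION[1]

print(f"Agentic workflow vs. coding session: {high_multiple:.0f}x to {low_multiple:.0f}x")
print(f"Daily tokens at full adoption: {low:.2e} to {high:.2e}")
```

At one workflow per worker per day, that works out to roughly 1.1 to 2.8 quadrillion tokens daily, with each workflow using 10x to 16x the tokens of a coding session on a like-for-like basis. Change the workflow rate or the adoption share and the total scales linearly.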

And the infrastructure to handle all this simply isn’t there yet. A chatbot session is quick, GPU-heavy, and low-context. An agentic session is the opposite: it runs for hours, takes actions continuously, and spreads its load more evenly across CPUs (to orchestrate actions), memory (to hold more context), and GPUs. As we wrote in ‘Notes on Compute’ last week, the server CPU-to-GPU ratio has already shifted from 1:12 in older training setups to 1:2 in agentic inference builds.
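Here is a small sketch of what that ratio shift means for CPU demand. The two ratios are the ones quoted above. The 12,000-GPU cluster size is a hypothetical number chosen only because it divides evenly.

```python
import math
from fractions import Fraction

# CPU:GPU ratios quoted above, expressed as CPUs per GPU.
TRAINING_RATIO = Fraction(1, 12)
AGENTIC_RATIO = Fraction(1, 2)

# Hypothetical cluster size, for illustration only.
GPUS = 12_000


def cpus_needed(gpus: int, cpus_per_gpu: Fraction) -> int:
    """Server CPUs required for a given GPU count, rounded up to whole CPUs."""
    return math.ceil(gpus * cpus_per_gpu)


training_cpus = cpus_needed(GPUS, TRAINING_RATIO)
agentic_cpus = cpus_needed(GPUS, AGENTIC_RATIO)
cpu_multiple = AGENTIC_RATIO / TRAINING_RATIO

print(f"Training build: {training_cpus:,} CPUs for {GPUS:,} GPUs")
print(f"Agentic build:  {agentic_cpus:,} CPUs for {GPUS:,} GPUs")
print(f"CPU demand per GPU rises {cpu_multiple}x")
```

For the same GPU count, the agentic build needs six times as many server CPUs (6,000 versus 1,000 in this example), which is why the shift matters for CPU and memory suppliers and not just GPU vendors.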

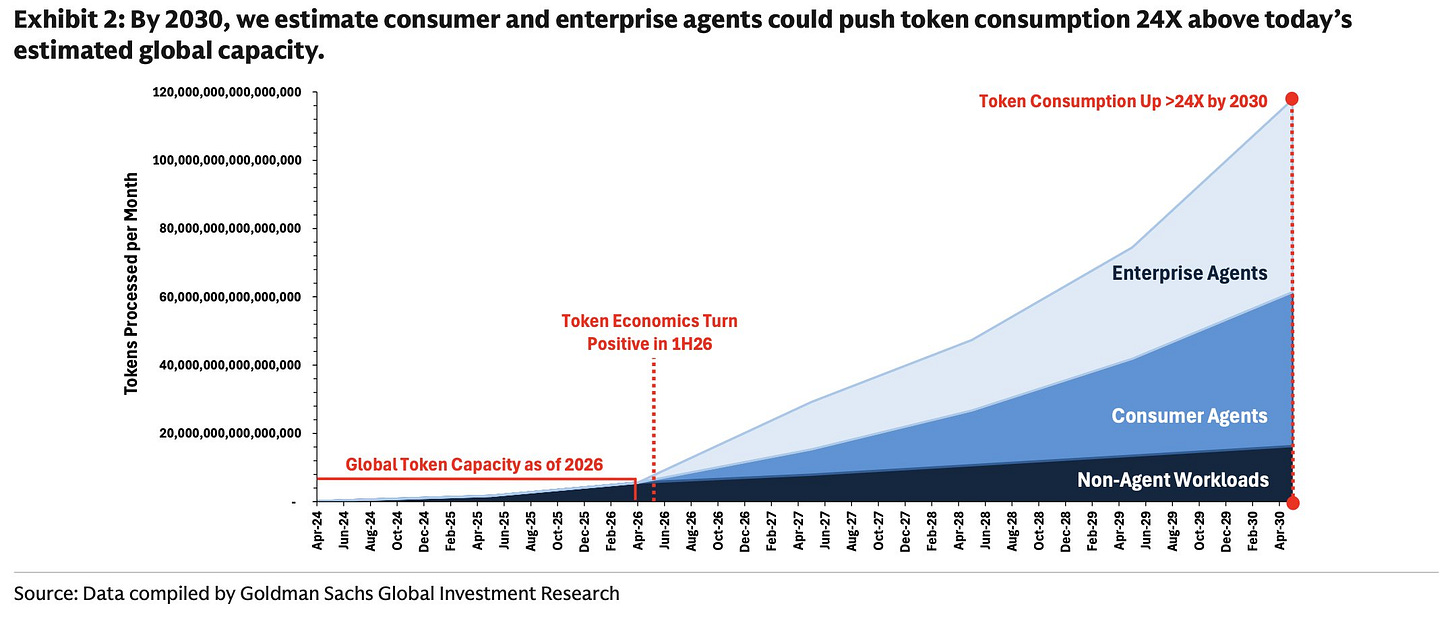

An explosion in token consumption

Goldman Sachs Global Investment Research now models that consumer and enterprise agents will push monthly token consumption to roughly 24× current global capacity by 2030. The crucial line on their chart is the dashed marker labeled ‘Token Economics Turn Positive in 1H26’, the moment any operator can deploy an agent whose output value exceeds its compute cost. That is when every company in the world will need to implement AI agents to compete.

Right now, agentic use cases are still more hobbyist tinkering than full-fledged commercial deployment.

Once an enterprise agent can operate continuously, 24/7, handle complex tasks, and recoup its costs, adoption accelerates rapidly, probably within months rather than years.

Mapping the stack

Most readers already have meaningful exposure to popular names such as Nvidia, AMD, Intel, TSMC, Samsung, and SK Hynix, either directly or through our Advanced Packaging basket.

The Agentic Basket below is deliberately tilted toward the under-covered stocks enabling agentic AI. These are the stocks with a higher chance of re-rating as the broader street discovers them.

Morgan Stanley’s exposure map is the best reference we’ve seen for the full agentic stack. We’ll walk through each segment, then assemble the basket.